GNU wget is a very useful utility that can download files over HTTP, HTTPS and FTP. Downloading a single file from a remote server is very easy. You just have to type:

$ wget -np -nd -c -r http://server/path/to/file

That’s all. The wget utility will start downloading the remote “file” and save it in the current directory with the same name.

When downloading a single file this works fine and will often be enough to do the job at hand easily, without a lot of fuss. Fetching multiple files is also easy with a tiny bit of shell plumbing. In a Bourne-compatible shell you can store the URLs of the remote files in a plain text file and then type:

$ while read file ; do \
    wget -np -nd -c -r "${file}" ; \
  done < url-list.txt

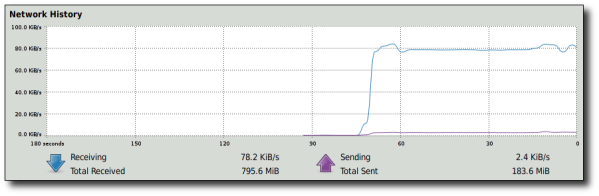

This small shell snippet will download the files one after the other, but it is a serial process: the second file starts downloading only after the first one has finished, so the connection is probably not fully utilized. Indeed, while fetching large files from a remote server, my DSL connection at home could fetch only about 78 Kbytes/sec when I was running one wget instance at a time.

One of the ways to achieve better download speeds for multiple files is to use multiple parallel connections. This is precisely the idea behind download managers: programs that can be fed a list of URLs and fetch them in parallel.

Spawning multiple child processes and watching them until they stop running is not a very difficult problem. As an experiment I tried writing a small process monitor in Python: one that can act as a “download manager” by spawning multiple wget instances and spawning new ones as the earlier ones finish their work.

The main parts of a script like this would be:

- Read a list of URLs from one or more files

- Build a list of “wget jobs” that have to be completed

- Spawn an initial set of N jobs (where N is the maximum number of parallel downloads)

- Watch for child processes that exit

- While there are more jobs, spawn a new one every time a child exits

The following script implements a scheme like this in relatively simple Python code:

    #!/usr/bin/env python
    #
    # Copyright (c) 2010 Giorgos Keramidas.
    # All rights reserved.
    #
    # Redistribution and use in source and binary forms, with or without
    # modification, are permitted provided that the following conditions
    # are met:
    # 1. Redistributions of source code must retain the above copyright
    #    notice, this list of conditions and the following disclaimer.
    # 2. Redistributions in binary form must reproduce the above copyright
    #    notice, this list of conditions and the following disclaimer in the
    #    documentation and/or other materials provided with the distribution.
    #
    # THIS SOFTWARE IS PROVIDED BY THE AUTHOR AND CONTRIBUTORS ``AS IS'' AND
    # ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE
    # IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE
    # ARE DISCLAIMED. IN NO EVENT SHALL THE AUTHOR OR CONTRIBUTORS BE LIABLE
    # FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL
    # DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS
    # OR SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION)
    # HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT
    # LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY
    # OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF
    # SUCH DAMAGE.

    import os
    import sys

    children = {}
    maxjobs = 8                 # maximum number of concurrent jobs
    jobs = []                   # current list of queued jobs

    # Default wget options to use for downloading each URL
    wget = ["wget", "-q", "-nd", "-np", "-c", "-r"]

    # Spawn a new child from jobs[] and record it in children{} using
    # its PID as a key.
    def spawn(cmd, *args):
        argv = [cmd] + list(args)
        pid = None
        try:
            pid = os.spawnlp(os.P_NOWAIT, cmd, *argv)
            children[pid] = {'pid': pid, 'cmd': argv}
        except Exception, inst:
            print "'%s': %s" % ("\x20".join(argv), str(inst))
        print "spawned pid %d of nproc=%d njobs=%d for '%s'" % \
            (pid, len(children), len(jobs), "\x20".join(argv))
        return pid

    if __name__ == "__main__":
        # Build a list of wget jobs, one for each URL in our input file(s).
        for fname in sys.argv[1:]:
            try:
                for u in file(fname).readlines():
                    cmd = wget + [u.strip('\r\n')]
                    jobs.append(cmd)
            except IOError:
                pass
        print "%d wget jobs queued" % len(jobs)

        # Spawn at most maxjobs in parallel.
        while len(jobs) > 0 and len(children) < maxjobs:
            cmd = jobs[0]
            if spawn(*cmd):
                del jobs[0]
        print "%d jobs spawned" % len(children)

        # Watch for dying children and keep spawning new jobs while
        # we have them, in an effort to keep <= maxjobs children active.
        while len(jobs) > 0 or len(children):
            (pid, status) = os.wait()
            print "pid %d exited. status=%d, nproc=%d, njobs=%d, cmd=%s" % \
                (pid, status, len(children) - 1, len(jobs), \
                 "\x20".join(children[pid]['cmd']))
            del children[pid]
            if len(children) < maxjobs and len(jobs):
                cmd = jobs[0]
                if spawn(*cmd):
                    del jobs[0]

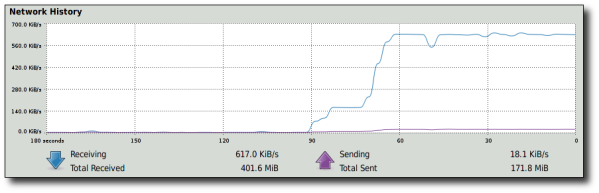

This is a very small wrapper around wget, but the difference it makes in download speed is quite dramatic. I tried running 8 parallel wget processes at the same time, by setting maxjobs=8 in the source of the script itself, and downloaded a set of relatively large files by typing:

$ ./pget.py url-list.txt

GNOME’s network monitor showed the download speed of the 8 parallel wget processes slowly increasing until, less than a minute after I started the downloads, it reached almost 8x the speed of a single wget process (617 Kbytes/sec, against an ideal of 8 * 78 Kbytes/sec = 624 Kbytes/sec).

That’s quite an impressive improvement. I think I’ll be using at least maxjobs=3 or 4 whenever I have to fetch multiple files from one or more locations :-)
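The arithmetic behind the "almost 8x" figure can be checked in a few lines. This is only a sketch using the two rates quoted in the post; the function names `speedup` and `efficiency` are made up for illustration.

```python
def speedup(parallel_rate, single_rate):
    """How many times faster the parallel run was than a single wget."""
    return parallel_rate / single_rate


def efficiency(parallel_rate, single_rate, njobs):
    """Fraction of the ideal njobs-times speedup that was actually reached."""
    return parallel_rate / (single_rate * njobs)


if __name__ == "__main__":
    single = 78.0       # Kbytes/sec with one wget (figure from the post)
    parallel = 617.0    # Kbytes/sec with 8 parallel wgets (figure from the post)
    print("speedup:    %.2fx" % speedup(parallel, single))              # 7.91x
    print("efficiency: %.1f%%" % (100 * efficiency(parallel, single, 8)))  # 98.9%
```

In other words, the 8 parallel downloads reached about 99% of the ideal 624 Kbytes/sec.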

The Python script looks nice, but to tell you the truth, it is too long for me to sit down and read. Wouldn’t something like the following be simpler?

max=4; for i in `seq 1 100`; do until [ `jobs | wc -l` -le $max ]; do sleep 1; done; echo $i && sleep 3 & done

I wrote it this way so that I could easily test it as a concept. Here is an untested version for your case:

max=4; cat url-list.txt | while read file; do until [ `jobs | wc -l` -le $max ]; do sleep 1; done; wget -np -nd -c -r "${file}" & done

It has the drawback that it checks only once per second whether a free slot is available, but in practice I don’t think that matters…

Steve: The built-in “jobs” command needs job control, and in some sh(1) implementations job control is either turned off by default in “script mode” or cannot be turned on at all. It’s not a bad idea though :-)

Brilliant! Can be used to parallelize any script! :)

excellent! thanks for the post!

No error handling but it works in Python 3.

    from multiprocessing import Pool
    import os
    import sys

    children = {}
    maxjobs = 8                 # maximum number of concurrent jobs
    jobs = []                   # current list of queued jobs

    # Default wget options to use for downloading each URL
    wget = "wget -q -nd -np -c -r "

    # Spawn a new child from jobs[] and record it in children{} using
    # its PID as a key.
    def call_command(command):
        os.system(command)

    if __name__ == "__main__":
        # Build a list of wget jobs, one for each URL in our input file(s).
        for fname in sys.argv[1:]:
            with open(fname, 'r') as f1:
                for line in f1:
                    cmd = wget + line.strip()
                    jobs.append(cmd)
        print("{} wget jobs queued".format(len(jobs)))

        pool = Pool(processes=8)
        pool.map(call_command, jobs)

I don’t know how to preserve indentation. :$

update (keramida): WordPress makes it hard to preserve code formatting in comments. I fixed it through its admin interface.

Heh, funny that Python (more than other languages) is vulnerable to indentation losses across pastes :P

The indentation is still there, including the original newlines (myle correctly used a CODE element around the text). It just makes it difficult to use PRE, which also preserves newlines when the text is displayed. Oh well :)

myle: I haven’t installed Python 3.X yet, because I use Mercurial a lot, both for personal and job-related projects, and I’m not sure if it works in Py3. I’ll keep the script for later though, thanks a lot!

Neat, although I still prefer serial downloads. Btw, Wget supports passing a list of files to download with the -i option: wget -i links.txt

Yep. The -i option would work fine for serialized multi-file downloads. I was just curious to see if I could parallelize things a bit without having to install a GUI-based download manager. It seems to have worked nicely enough :-)

Very nice, Giorgos, I use it very often. I made a small change so that you can pass parameters to wget. I have uploaded it here: http://gist.github.com/285136/

Thanks, Christos! The idea of using “--” for extra wget args is fantastic :-)

Yes, the “--” trick is very handy! The idea comes from git, where “--” is used to separate revisions from files in case they have the same name (e.g. in git diff). I made one more addition so that it can read URLs from stdin.
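The two ideas discussed here (a “--” separator for extra wget arguments, and URLs read from stdin) could look roughly like the sketch below. It is not the code from the gist: the helper names `split_args` and `read_urls` are hypothetical, and it assumes that URL list files come before the “--” and extra wget options after it.

```python
import sys


def split_args(argv):
    """Split argv into (url_files, extra_wget_args) around the first "--".

    Assumption: list files come before the separator, wget options after it.
    """
    if "--" in argv:
        cut = argv.index("--")
        return argv[:cut], argv[cut + 1:]
    return list(argv), []


def read_urls(paths, stdin=None):
    """Yield non-empty URLs from each file in paths, or from stdin if none."""
    if not paths:
        source = stdin if stdin is not None else sys.stdin
        for line in source:
            if line.strip():
                yield line.strip()
        return
    for path in paths:
        with open(path) as handle:
            for line in handle:
                if line.strip():
                    yield line.strip()


if __name__ == "__main__":
    # Example split for: pget.py url-list.txt -- --limit-rate=50k
    files, extra = split_args(["url-list.txt", "--", "--limit-rate=50k"])
    print(files, extra)
```

Each wget job would then be built as the default option list, plus the extra arguments, plus one URL.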

Note that xargs can do this for you. To run 8 wgets in parallel:

cat urls | xargs -n 1 -P 8 wget

Amazing! We should read man pages more often..

Heh, excellent trick. I’ve never used xargs -P, but I foresee many interesting uses in the near future :)
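For comparison, the behaviour of `xargs -n 1 -P 8` can be approximated in Python with a thread pool in which each worker runs one command at a time. This is a rough sketch, not a replacement for xargs; the function name `run_parallel` is made up for illustration, and the demo uses harmless stand-in commands instead of real downloads.

```python
import subprocess
import sys
from concurrent.futures import ThreadPoolExecutor


def run_parallel(base_cmd, items, workers=8):
    """Run base_cmd + [item] for every item, at most `workers` at a time.

    Returns the exit statuses in the same order as `items`.
    """
    def run_one(item):
        # Each worker thread blocks here until its child process exits.
        return subprocess.call(base_cmd + [item])

    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(run_one, items))


if __name__ == "__main__":
    # Stand-in for run_parallel(["wget"], urls, workers=8): each child
    # simply exits with the status given as its argument.
    probe = [sys.executable, "-c", "import sys; sys.exit(int(sys.argv[1]))"]
    print(run_parallel(probe, ["0", "0", "3"], workers=2))
```

The threads spend their time waiting on child processes, so the number of workers is effectively the number of parallel downloads.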

Works great. I use this to download rapidshares, so i have a bashfile do some things with cookies first, and then call this python script.

Thanks.

I think you can also try axel

You are welcome, Marc. I will have a look at axel too. It looks rather interesting :-)

Hi, there is a simple bash line that can be useful.

cat | xargs -n 1 -P wget -nv

Regards!

hello

can u write this code with php?

if u can please write it

tank u

Nope, sorry. I don’t “do” PHP if I can help it.

The main idea is quite simple though. It should be possible to “port” the Python code to PHP in a few minutes.

Somehow when I use this script it simply downloads all the URLs in parallel, without any restriction on the number of jobs. I tried indenting the code again, but maybe I made a mistake in the tab levels.


awesome. this is what i was looking for.

that xarg thing is gr8..

yea, i seldom look into the man pages of different commands when i am sure of one.. after that i come to know how childish i was.

i made a script to download my favorite newspaper.

well, the newspaper that i want comes only as pages, so i had to download the pages using a loop and wget -i and then pdftk them.

and i was looking for something to parallelize the downloads, and this serves the purpose.

thanks john.

thanks a lot.

good effort by the author.

:)

Nice script. Most of the time I stupidly use:

for down in `cat downloadlist`; do wget -bc --load-cookies=cookies.txt $down; done

Most of the time some files are not fully downloaded and some are not downloaded at all, so I still have to run wget -c --load-cookies=cookies.txt -i downloadlist

I am trying to find a web-based download manager that can run on Linux. Do you have a suggestion?

This script works so well! My bash version wasn’t nearly as efficient. Thank you so much!

Hello there! This post could not be written any better! Reading through this post reminds me of my good old room mate! He always kept talking about this. I will forward this page to him. Fairly certain he will have a good read. Many thanks for sharing!

there’s also parallel(1) from the moreutils package, eg. parallel -j 4 wget -- [list of urls]

Sad !!!!

Exactly the script I want… but under windows, no way to use spawn / spawnlp….

Do you know how i can update it ?

Thanks

It may be possible to replace spawn with ‘import subprocess’. I don’t really know if subprocess works better in Windows environments, but it’s supposedly much better than the low-level os/sys code.
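A minimal sketch of that suggestion, assuming Python 3: it keeps the same scheme (start up to maxjobs children, start another whenever one exits) but uses `subprocess.Popen` and polling instead of `os.spawnlp` and `os.wait`. The function name `run_jobs` and the polling interval are choices made for this example, and the demo runs harmless stand-in commands rather than wget.

```python
import subprocess
import sys
import time


def run_jobs(commands, maxjobs=4, poll_interval=0.1):
    """Run each argv list in `commands`, at most `maxjobs` at a time.

    Returns a list of (argv, exit_status) pairs in completion order;
    exit_status is None for a command that could not be started.
    """
    queue = list(commands)      # jobs that have not been started yet
    running = []                # (Popen, argv) pairs that are still active
    finished = []               # (argv, exit_status) pairs

    while queue or running:
        # Top up the set of running children from the queue.
        while queue and len(running) < maxjobs:
            argv = queue.pop(0)
            try:
                running.append((subprocess.Popen(argv), argv))
            except OSError as exc:
                print("'%s': %s" % (" ".join(argv), exc))
                finished.append((argv, None))

        # Collect the children that have exited; keep the rest.
        still_running = []
        for proc, argv in running:
            status = proc.poll()
            if status is None:
                still_running.append((proc, argv))
            else:
                finished.append((argv, status))
        running = still_running

        if running:
            time.sleep(poll_interval)

    return finished


if __name__ == "__main__":
    # Stand-in jobs; real ones would be lists such as
    # ["wget", "-q", "-nd", "-np", "-c", "-r", url].
    demo = [[sys.executable, "-c", "pass"] for _ in range(5)]
    for argv, status in run_jobs(demo, maxjobs=2):
        print("status=%s cmd=%s" % (status, " ".join(argv)))
```

Polling costs a short delay before a free slot is reused, but it avoids `os.wait`, which is not available on Windows.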